Why AI’s Biggest Blind Spot In Pharma Isn’t Technical

This article was originally published by Anna Forsythe in Forbes on 03 March 2026. I spend a lot of time working with artificial intelligence, and I am constantly struck by a contradiction: On one hand, AI has become remarkably fluent. It can summarize dense material, surface insights from massive datasets and produce text that looks confident enough to pass for expertise. On the other hand, in some of the places where accuracy matters most, AI remains almost entirely clueless about real decision making. Pharmaceutical evidence generation is one of those places. A Systemic Blind Spot Despite years of excitement about AI in healthcare, there are still no widely used systems that autonomously maintain living, submission-ready evidence for regulatory or reimbursement purposes. This is often framed as a technology gap. In my experience, it is much more than a gap; it is a systemic blind spot not easily remedied. It exposes a misunderstanding of what regulated evidence actually is and what today’s AI systems are built to do. Systematic literature reviews are not summaries. Nor are they answers to questions posed after the fact. They are strictly governed by carefully regulated processes. They begin with predefined protocols, require transparent inclusion and exclusion logic, and must withstand scrutiny long after the original analysis is complete. Regulators and payers do not just ask what conclusions were reached. They ask how those conclusions were formed, what was excluded along the way and whether the same decisions would be made again if the review were repeated. They need to understand how those decisions were made. This is where conversational AI systems struggle in ways that cannot be fixed with better prompts or larger models. Large language models are optimized for plausibility, not traceability. They are designed to produce likely responses, not to preserve the reasoning trail behind each decision. 
While they can describe evidence convincingly, they cannot reliably explain why one study mattered more than another, or why a marginal trial was excluded at a particular point in time. When the literature updates—as it constantly does in oncology—those inconsistencies compound. I have seen teams experiment with using chatbots to “speed up” evidence reviews, only to discover that what looks efficient at first quickly becomes indefensible under scrutiny. The problem is not that the chatbot models are unsophisticated. It is that the task itself—the special sauce behind the systematic literature review—is not generative in nature. That special sauce needs to be procedural, auditable and accountable. At the same time, the pressure to maintain living, constantly updated evidence has never been higher. Clinical data no longer arrives in neat cycles. New trials appear between guideline updates. Regulatory decisions shift comparators. Payers ask questions that did not exist when the original review was written. Static evidence simply cannot keep up. This has created a strange stalemate. Fully manual processes are too slow and resource-intensive. Fully automated ones are not trustworthy. Many organizations quietly accept the friction, even as they invest heavily in AI elsewhere. A Change In Design What ultimately breaks that stalemate is not better AI, but a different way of designing work. In regulated environments, the only approach that scales is human-in-the-loop intelligence. Machines do what they are good at—continuous surveillance, structured extraction, pattern detection—while humans retain ownership of judgment, interpretation and accountability. When designed properly, this does not slow teams down. But it does change where expertise is applied. What surprises many leaders is that this challenge is not unique to pharma. Several years ago, I had a conversation with an executive in commercial aviation who described a similar tension. 
Modern aircraft are astonishingly automated. They can take off, navigate complex airspace and land with minimal human input. Yet aviation has never tried to remove pilots from the cockpit. In fact, as automation has increased, pilot training has become more rigorous, not less. The reason is trust. When something goes wrong at 35,000 feet, no one accepts “the system thought it was likely” as an explanation. Automation is expected to assist, not absolve. Human oversight is not a fallback; it is part of the system’s credibility. Evidence generation works the same way. Regulators do not reject AI because it is new. They reject opacity. They expect to see where judgment was applied, and by whom. Systems that blur that boundary undermine trust, even if their outputs look impressive. What’s Holding Organizations Back? What ultimately holds organizations back from building these hybrid systems is rarely technology. It’s culture. Most companies are still organized around projects with defined endpoints, not living assets that require continuous stewardship. Evidence is treated as a document to be delivered, not an infrastructure to be maintained. AI initiatives are evaluated on novelty and visibility, not on whether they quietly reduce friction year after year. Changing that requires leadership restraint. It means resisting the temptation to deploy tools that demo well but cannot be defended later. It also means investing in governance, workflow redesign and cross-functional ownership—none of which make headlines, but all of which determine whether AI creates real value. The most important lesson I have learned working at the intersection of AI and regulated decision making is this: Fluency is not the same as reliability. The organizations that succeed with AI will not be the ones that generate the fastest answers, but the ones that can explain and stand behind those answers when it matters. In pharma, as in aviation, intelligence is only as valuable as the trust it earns. 
And that means human beings in the chain of command.

Pharma’s Biggest Missed AI Opportunity Is Living Evidence

This article was originally published by Anna Forsythe in Forbes on 29 January 2026. At this year’s J.P. Morgan Healthcare Conference, the largest healthcare investment symposium in the industry, it is no surprise that artificial intelligence featured prominently across a wide range of discussions from drug discovery, target identification and molecule design to clinical trial optimization and operational efficiency. AI applications are now fully embedded in each of these core pharmaceutical R&D strategies. What was far less visible, however, was the role AI could play in the systematic evaluation of scientific literature that underpins nearly every strategic, regulatory and reimbursement decision in modern pharma—or evidence generation. This omission is notable, in fact critical, at a time when AI-assisted evidence generation represents one of the industry’s most immediate and measurable opportunities for return on AI investment. Where AI Is Being Applied Today Current AI adoption in pharma tends to focus on highly visible areas closely associated with innovation, such as accelerating discovery timelines, improving trial execution and supporting internal productivity. These use cases already demonstrate long-term value and competitive differentiation. Still, the majority of high-stakes decisions in pharma do not hinge on discovery algorithms alone. Instead, they depend, as they have for decades, on structured assessments of existing evidence about disease burden and unmet need, historical endpoints and comparator performance, safety signals and evolving standards of care. These traditional assessments inform decisions ranging from trial design and asset valuation to regulatory strategy and pricing. Despite their importance, evidence workflows remain largely manual and highly fragmented. Navigating Using Outdated Maps A useful analogy is navigation. When trying to reach a destination, no one relies on an outdated static map (remember MapQuest?) printed years ago. 
Roads change, traffic patterns evolve and more efficient routes emerge constantly. Modern navigation relies on GPS systems that update continuously and reroute in real time. Pharma, however, still navigates critical decisions using static evidence reviews. Systematic Literature Reviews (SLRs), which have long been the gold standard for evidence synthesis, continue to be conducted as project-based exercises. This one-off approach is expensive and time-consuming, and the results are quickly outdated as new publications appear, guidelines are revised or new therapies enter the market. Once completed, these project-based exercises often live in disconnected silos, requiring tweaking or partial reconstruction to support the next decision. In a scientific environment that evolves daily, this reliance on static evidence is an increasingly poor fit, especially when living, continuously updated maps offer a cost-effective alternative. Increasing Regulatory And Reimbursement Pressure The limitations of static evidence are becoming more consequential as medical reimbursement systems evolve. In the United States, Medicare price negotiations are now in their third cycle under the Inflation Reduction Act. Medicare Part B drugs in oncology, for example, once largely insulated from pricing negotiations, are now fully in scope as of 2026. Manufacturers are expected to justify pricing not only based on evidence available at launch but also relative to new comparators and changing standards of care that continuously emerge over time. In Europe, the Joint Clinical Assessments (JCA), designed to create a unified, cross-national analysis of the efficacy of new drugs, raise expectations further. Companies must consider all relevant comparators across all EU member states, address multiple subpopulations and present comprehensive, transparent evidence syntheses that can withstand scrutiny across multiple jurisdictions. 
In both settings, evidence is no longer assessed at a single point-in-time. At a time when regulatory and reimbursement demands are continuously being re-evaluated, conventional static snapshots struggle to keep pace with these demands as they evolve. The Cost Of Fragmentation Despite this pressure, evidence generation in pharma remains highly fragmented. Different functions (R&D, regulatory, health economics, market access, commercial) often commission their own literature reviews for similar questions. Reviews are modified, repeated and localized across regions, frequently by different external vendors and internal teams. Assumptions diverge. Institutional knowledge is lost. Redundancy accumulates. That redundancy is costly. A single high-quality SLR routinely costs six figures and takes months to complete. For global organizations with large portfolios, the cumulative cost of duplicated effort is substantial. More importantly, fragmented evidence increases the risk of inconsistency at moments when alignment matters most. Why General-Purpose AI Falls Short Generative AI tools like ChatGPT and chatbots are often cited as a solution. While useful for summarization or exploration, they are not designed to produce regulatory-grade evidence. Regulatory and reimbursement decisions require predefined methods, transparent inclusion criteria, traceable citations, reproducibility and alignment with established systematic review standards. Outputs must be auditable and defensible. General-purpose AI systems prioritize fluency over traceability and cannot replace structured evidence synthesis. In low-risk settings, speed may outweigh rigor. In regulated environments, rigor is non-negotiable. The Case For Living Evidence The alternative is a shift from static reviews to living evidence. A living evidence approach treats evidence as shared infrastructure rather than as a series of isolated projects. 
Evidence is continuously updated as new data emerges, centrally governed, and organized by indication, population, comparator and endpoint. Updates are incremental rather than repetitive, and changes are transparent. Functionally, this mirrors how GPS systems work: always current, responsive to new information and capable of supporting multiple routes and decisions from the same underlying map. Such an approach could support better decision-making across the product life cycle, reduce duplication and improve consistency under increasing regulatory and reimbursement scrutiny. Why The Shift Has Been Slow If the potential benefits are clear, why has adoption been limited? One reason is organizational structure. Evidence budgets are typically allocated by function, by brand and by project. Living evidence, by contrast, is shared longitudinally and is cross-functional. Adoption requires investment at an enterprise level rather than ownership by a single team. Living evidence is also, by its nature, less visible than discovery breakthroughs or novel technologies. Yet visibility and return are not the same. As AI continues to reshape pharma, the most impactful opportunities may lie not only in discovering new drugs faster, but in navigating the increasingly complex evidence landscape more intelligently. In an industry under growing pressure to

Why Chatbots Aren’t Enough In Oncology

This article was originally published by Anna Forsythe in Forbes on 13 November 2025. In the fast-moving world of oncology, clinical decision making has never been more complex—or more urgent. Thousands of new cancer studies are published every month, each with findings that could alter treatment pathways or reshape guidelines. For oncologists, research teams, hospitals and payers, the challenge isn’t simply finding information—it’s finding the right information, quickly and confidently. The market is full of AI-powered tools promising help. Many rely on large language models (LLMs) and chatbot-style interfaces that offer answers in conversational form. The appeal is obvious: type in a query, get an instant response. But in oncology—where the stakes are measured in survival rates—ease of use is not enough. Why Decisions Are So Complicated Consider a patient with late-stage lung cancer whose tumor harbors a rare genetic mutation. This is the reality of modern oncology, which offers targeted therapies for specific genetic mutations. The physician must weigh the disease stage, prior therapies, co-morbidities and preferences. They must verify whether a targeted therapy exists, check FDA approvals, review guideline recommendations and explore whether a clinical trial could provide access to the latest investigational drug. This involves combing through journal articles, conference abstracts and regulatory documents—each a piece of the puzzle. There is no “one-size-fits-all” solution in an era where targeted therapies produce individualized pathways. A chatbot might return a single response based on an editorial or opinion piece it “remembers,” presenting it as definitive. The nuance—say, that another trial showed limited efficacy in heavily pre-treated patients, or that guidelines recommend a different approach after immunotherapy—can easily be lost. The Gold Standard: Systematic, Comprehensive, Expert-Vetted Medicine relies on the hierarchy of evidence. 
At its peak sit systematic reviews and meta-analyses: studies that evaluate and synthesize all available research. Regulatory agencies like the FDA, as well as organizations such as the American Society of Clinical Oncology (ASCO) and the National Comprehensive Cancer Network (NCCN), have long required systematic reviews as the foundation for guidelines and approvals. An effective oncology decision support tool must therefore also be systematic, with transparent, reproducible searches of all relevant research. It must be comprehensive, drawing from peer-reviewed journals, guidelines, conference abstracts and regulatory filings. It must be robust in distinguishing between high-quality randomized trials and weaker evidence. Just as importantly, it must update continuously (ideally daily) to reflect the latest research. Medical decisions based on outdated knowledge risk outdated care. Finally, it must be expert-vetted, so that trained oncologists and other specialists can ensure the conclusions are accurate. Where Chatbots Fall Short I’ve found that even the most advanced LLMs cannot meet those criteria. Their weaknesses are structural. Built for speed and limited in transparency, chatbots rarely disclose their sources. They may omit references entirely, and without systematic searching, key studies are often missed. Their datasets often exclude recent guideline updates or pivotal conference results. Moreover, as black boxes reliant on opaque algorithms, chatbots provide no evidence grading. An editorial can appear with the same weight as a phase three trial. They may even fabricate references, the widely reported “hallucinations.” In my experiments, queries have sometimes led to outdated and false information. In one instance, a chatbot cited a non-existent study to me. Transparency of the dataset is critical, especially in a field where thousands of new studies are published each month. 
Using AI on an iPhone to call a taxi is convenient, but in oncology, where each decision can alter survival, these shortcomings aren’t just inconvenient; for a patient with a rare mutation, they can mean the difference between hope and harm. Beyond Oncology: A Universal Lesson The risk of relying on incomplete or unverified evidence isn’t unique to cancer care. In finance, successful portfolio managers don’t bet other people’s money on one analyst’s hunch; they use meta-analyses of market data. In aviation, flight safety depends on synthesizing thousands of reports and assessments. No pilot would fly based on a chatbot’s opinion about turbulence. In public health, vaccine rollouts depend on systematic reviews of global trial data, not a handful of preliminary studies. Across industries, convenience cannot replace rigor. The ideal system in oncology, and in other data-driven fields, is an expert-driven partner that can provide trustworthy insights. The Human and AI Solution Despite the limitations of chatbots, the beauty of AI is how it can scan millions of documents in seconds, helping detect patterns and surface relevant studies. With the mountain of data produced every day, that feature is undeniably important. But human experts are needed to bring judgment, clinical context and critical thinking to the mix. I think the winning model is a living systematic literature review (SLR): continuously updated by AI, structured through reproducible methodology and validated by experts. (Disclosure: I lead an AI-assisted oncology evidence platform built on this type of approach.) LLMs power today’s chatbots, but they can also hallucinate or misread complex evidence. The approach I champion still uses LLMs, but with continuous expert oversight. Every data point should be verified by trained analysts and clinicians, eliminating hallucinations and ensuring full transparency. That said, I find this hybrid model effective but demanding. 
It requires capital, expertise and time to build for each cancer type. And even then, people still prefer someone or something they can talk to. The future may lie in combining both approaches: a conversational chatbot connected to a rigorously curated, expert-verified database. By working to overcome these hurdles, pharmaceutical companies, payers and healthcare networks stand to benefit as much as clinicians. Beyond oncology, systematic, AI-augmented evidence synthesis has the potential to streamline internal decision making, support value-based care initiatives, strengthen negotiations and reduce duplication across research teams. The Bottom Line AI is here to stay, and its potential in healthcare is enormous. But in oncology, and in every field where lives or livelihoods are at stake, it must be deployed with discipline. Chatbots may offer instant, conversational answers, but approachability is not the same as reliability. Anna Forsythe, pharmacist & health economist, is the Founder & CEO of Oncoscope-AI.

The Danger of Imperfect AI: Incomplete Results Can Steer Cancer Patients in the Wrong Direction

This article was originally published in International Business Times on 09 October 2025. Cancer patients cannot wait for us to perfect chatbots or AI systems. They need reliable solutions now, and not all chatbots, at least so far, are up to the task. I often think of the dedicated and overworked oncologists I have interviewed who find themselves drowning in an ever-expanding sea of data: genomics, imaging, treatment trials, side-effect profiles, and patient co-morbidities. No human can process all of that unaided. Many physicians, in an understandable and even laudable effort to stay afloat, are turning to AI chatbots, decision-support models, and clinical-data assistants to help make sense of it all. But in oncology, the stakes are too high for blind faith in black boxes. AI tools offer incredible promise for the future, and AI-augmented decision systems can improve accuracy. One integrated AI agent raised decision accuracy to 87.2%, from 30.3% for the baseline GPT-4 model. Clinical decision AI systems in oncology already assist in treatment selection, prognosis estimates, and synthesizing patient data. In England, for example, an AI tool called “C the Signs” helped boost cancer detection in GP practices from 58.7% to 66.0%. These are encouraging steps, but anything below 100 percent is not enough when life is at stake. Cancer patients cannot afford to wait for us to resolve the issues these technologies still have. We risk something far worse than delay; we risk bad decisions born from incomplete, outdated, or altogether fabricated information. One of the worst issues is “AI hallucination”: cases in which an AI presents false information, invented studies, nonexistent anatomical structures, or incorrect treatment protocols. In one shocking example, Google’s health AI misdiagnosed damage to a “basilar ganglia,” an anatomical part that doesn’t exist. 
The confidently presented output looked authoritative until physicians recognized the error. Recent testing of six leading models, including OpenAI and Google’s Gemini, revealed just how unreliable these systems can be in medicine. They produced confident, step-by-step explanations that looked persuasive but were riddled with errors, ranging from incomplete logic to entirely fabricated conclusions. In oncology, where every patient is an outlier, that margin of error is unacceptable. Even specialized medical chatbots, which may sound authoritative, still present opaque and untraceable reasoning—their sources inconsistent, and their statistics often meaningless. This is decision distortion. The legal and ethical implications are real. If a treatment based on AI guidance causes harm, who is liable? The physician? The hospital? The AI developer? Medical-legal frameworks are scrambling to catch up, with some warning that overreliance on AI without human oversight could itself constitute negligence. The problem of AI hallucination extends beyond the medical realm. In the legal world, AI hallucinations have already led to serious consequences: in at least seven recent cases, courts disciplined lawyers for citing fake case law generated by AI. In one high-profile case, Morgan & Morgan attorneys were sanctioned after submitting motions containing bogus citations. If courts are demanding accountability for AI mistakes in law, how long before the medical malpractice lawsuits start being filed? In oncology, especially, reliance on AI amplifies risk because of how the tools are trained. Many large language models or decision systems depend on fixed journal cohorts or curated datasets. New oncology breakthroughs may remain outside that training collection for months or years. When we query such a system, it may omit the newest trial, ignore emerging biomarkers, or default to an outmoded standard of care. 
When AI invents studies or hallucinates efficacy, and doctors rely on it, patients pay the price. Moreover, cutting-edge medical data is often fragmented, diversified, and non-standardized; imaging formats differ, electronic health record notes are not uniform, and rare biomarkers may exist only in supplementary data. AI does best with well-structured, consistent data; it struggles with the disorder at the frontier of research. That means decisions about novel or borderline cases may be precisely where AI is least reliable. I’m not arguing that we scrap AI in cancer care. On the contrary, we must keep developing these tools, pushing boundaries, harnessing the power of computation to spot patterns no human sees. But we must not hand over ultimate decision-making authority to them, at least not yet. Cancer patients deserve better than experiments. They deserve human physicians who remain in the loop, who audit, challenge, and interrogate AI outputs. We need an architecture of human and AI collaboration. When a chatbot suggests a regimen, the oncologist should review supporting evidence, check for newly published trials, and confirm that the model’s assumptions match the patient’s specifics. The physician must own the decision. We can establish effective guardrails by implementing regular validation of AI systems with updated clinical data. By promoting transparency in training sources and mandating human review of all AI-suggested decisions, we can enhance overall trust in these technologies. Additionally, developing clear liability rules will help ensure accountability and foster responsible innovation. In practice, that means clinics deploying AI decision tools should monitor AI output, compare outcomes, run audits, and allow physicians to override or correct AI suggestions. We must also push for standardization of data, sharing across institutions, open and timely inclusion of new studies, and rigorous mechanisms to flag contradictions or hallucinations. 
Without that, the models will always lag the frontier. Cancer patients cannot wait for us to achieve AI perfection. But they deserve the best possible care now, and that requires that we never abandon human responsibility in the name of speed. AI must serve as an assistant, not a dictator. Humans are in charge of deliberation and decision-making, and they must always prioritize caution when faced with unverified or ambiguous algorithmic output. AI chatbots are tools, not authorities. When we start letting algorithms decide instead of doctors, we have crossed from medicine into potential malpractice. Cancer patients don’t need perfect chatbots. They don’t have the time for the technology to catch up, and they cannot afford doctors who make decisions based on incomplete or outdated information. For patients and their families, the stakes are too high, and they deserve a much higher standard of

From Evidence To AI: Why The Future Of Oncology Decision Support Must Be Built On Living Evidence

This article was originally published in Forbes on 18 September 2025. How do oncologists decide which treatment to give their patients? It’s rarely an easy choice. Physicians must weigh multiple levels of information, such as the patient’s disease stage, genetic markers, previous therapies, overall health in general and even personal preferences. Then comes the quest for evidence. In order to validate the optimal way forward, oncologists need not only know what is effective, but also whether it is FDA-approved, guideline-adherent or available through a clinical trial. To find the best, most up-to-date information, that validation typically involves toggling between PubMed, society guidelines, journal notifications and conference summaries, and then rationalizing information that doesn’t always align. All of this is tedious and time-consuming. Time that most oncologists don’t have. In a high-volume clinic, a medical oncologist may see 30 to 50 patients in a day. But even with all of those time pressures, each and every decision should be made with the latest, most complete and scientifically valid evidence available. The stakes are high. With the mountain of new research and evidence published in oncology journals constantly expanding, evidence literally shifts by the day. Those shifts in evidence—the decisions between the right and wrong treatment—can be life or death. The Enduring Value Of Evidence Hierarchies Medicine has long recognized that not all evidence is created equal. A single case report may stimulate ideas, but it cannot guide practice. Observational studies provide associations but not certainty. Randomized controlled trials minimize bias and provide more insight. But at the very top of the hierarchy are systematic reviews and meta-analyses, which combine the entire weight of the evidence. This hierarchy matters because medicine is complicated. 
If we relied on anecdotes or headlines in isolation, patients would be subjected to treatments that look promising by themselves but prove ineffective or even counterproductive when considered in context. For this reason, organizations from the FDA to WHO mandate Systematic Literature Reviews (SLRs) when shaping guidelines, approvals and policies. Systematic reviews are the gold standard for evaluating medical evidence—the safety net for modern medicine. They prevent us from the risks of cherry-picking studies, overvaluing anecdotes or relying on unverified opinions. The Lure And Risk Of Chatbots Given the deluge of new medical information—and the tedium of just reading it all, let alone evaluating it—it’s no wonder that AI chatbots have captured attention. Faced with information overload, the idea of typing a quick question and receiving a fluent, confident paragraph or two is more than just appealing. It can be viewed as a lifeline for busy oncologists. But that’s where the danger lies. Chatbots don’t conduct systematic reviews. They can’t distinguish between high-quality trials and weak studies. They don’t verify whether a therapy is FDA-approved or buried in an outdated guideline. And in some cases, they even fabricate references, miss key data or rank that data inappropriately. Convenience can be seductive, but in oncology, where the margin for error is minute, the cost of error, or incomplete or inaccurate information, is disastrous. That convenience might be harmless if you’re asking Siri to find the nearest grocery store. But in cancer treatment, the right choice can extend life. The wrong choice can cut it short. Evidence Hierarchies Matter Everywhere The lesson extends well beyond oncology. In cardiology, guidelines for heart failure shift frequently. Missing an update could mean prescribing a less effective therapy. 
In infectious disease, choosing the wrong antibiotic fuels global resistance—making “tried and true” therapies less potent, and new approved therapies a better solution. Outside of medicine, the same principle holds true. Financial advisors trust portfolio strategies grounded in decades of cumulative analysis, not a single trader’s hunch. Aviation safety regulations are shaped by the aggregation of countless investigations, not anecdotal exceptions. Across industries, systematic, comprehensive evidence beats selective inputs every time. From Static Reviews To Living Evidence If chatbots aren’t the solution, then what is? The answer lies in bringing evidence hierarchies into the era of AI. Imagine a living systematic review in real-time, providing a comprehensive, up-to-date synthesis of the evidence—backed by AI and vetted by humans. Instead of replacing systematic reviews, AI in this new paradigm augments them. Algorithms filter through the sheer volume of new publications, screen for relevance, raise quality issues and update evidence maps in real time. And then experts evaluate the results before they reach the physician’s desktop. This model is rigorous yet addresses medicine’s biggest bottleneck—time. Doctors would no longer be forced to sort through hundreds of studies manually. Instead, they would access a dynamic, physician-ready summary rooted in the totality of evidence. AI does the heavy lifting of scanning and sorting, while human experts remain the arbiters of interpretation. A Human-AI Partnership This combination is the future that I am dedicated to and the foundation of the work that my team is producing. At Oncoscope, we don’t rely on generative AI to spin out answers. Instead, we use a suite of AI models to reproduce and accelerate the standardized steps of a systematic review. Think of it like a symphony. AI can tune the instruments, arrange the sheet music and keep the score updated in real time. 
But only the conductor—the oncologist—can interpret the music for the audience. This collaboration leverages each party’s strength: Machines are better at speed and repetition, while humans are better at judgment and context. The end product is evidence, both thorough and up-to-date, that doesn’t overwhelm the clinicians who need to implement it.

Why Caution Matters Now

The enthusiasm around AI in healthcare is understandable. Physicians are busy, patients are better informed than ever and the pace of discovery keeps accelerating. But in our rush to adopt new technology, we risk abandoning the very safeguards that make modern medicine safe. It would be unthinkable to prescribe chemotherapy based on a single press release, yet we risk doing something similar if we accept unverified chatbot outputs at face value. In oncology, where decisions often cannot be undone, shortcuts are dangerous. Archibald Cochrane, the father

Systematic Literature Review Versus Chatbots: Why In Oncology, It’s Not a Choice

In the age of artificial intelligence, speed is often mistaken for rigor. Nowhere is this more dangerous than in oncology, where treatment decisions can mean the difference between life and death. Some technology companies tout “systematic literature reviews” (SLRs) generated in minutes by chatbots that claim to scan thousands of papers across the internet. The appeal is obvious: quick, accessible, and seemingly comprehensive. But in reality, these outputs are neither systematic nor reliable. For oncologists, payers, and researchers, understanding the distinction between a true SLR and a chatbot’s surface-level search is not just academic—it’s essential.

The Gold Standard: What a True SLR Involves

A systematic literature review is the gold standard for evidence synthesis in medicine. It is the foundation of evidence-based practice because it minimizes bias, ensures completeness, and enables decisions to rest on the strongest available science.

A rigorous SLR begins with a protocol: a predefined roadmap that frames the research question and methods. It requires carefully constructed search strategies, typically using combinations of keywords and controlled vocabulary, to capture every relevant publication across peer-reviewed databases. The process doesn’t stop there. Grey literature—such as abstracts from scientific conferences—must also be included, since cutting-edge oncology data often appears in congress presentations long before it reaches a journal.

From there, studies undergo multi-step screening against strict inclusion and exclusion criteria: patient population, interventions, comparators, outcomes, and study design (the classic PICO framework, extended with study design as PICOS). Each selected paper is then critically appraised for quality and relevance. Only after this painstaking filtering does the work of synthesis and interpretation begin. This is not a clerical exercise.
It requires advanced training, sound judgment, and clinical insight to evaluate conflicting results, contextualize findings, and translate them into actionable conclusions.

Why Chatbots Fall Short

Chatbots, even those powered by large language models (LLMs), cannot replicate this process. At best, they skim unstructured text. At worst, they hallucinate citations or omit critical studies. They have no protocol, no inclusion criteria, no appraisal of study quality, and no transparent audit trail. The result may look convincing on the surface, but it lacks the depth and reliability required in oncology.

When a chatbot says it can “review 1,000 studies in seconds,” what it’s really doing is producing a text summary based on whatever sources it happens to ingest. There is no guarantee that the sources are peer-reviewed, complete, current, or even real. That is not an SLR.

Why It Matters in Oncology

Oncology is not forgiving of shortcuts. Selecting the right therapy for a patient is an exercise in precision: choosing between regimens, sequencing targeted therapies, balancing efficacy and toxicity, and staying current on breakthroughs that can extend survival or improve quality of life. In this context, incomplete, outdated, or fabricated evidence isn’t a minor flaw—it’s a threat to patient safety. The rigor of a systematic literature review is not a “nice to have”; it’s the foundation for making responsible decisions in cancer care.

The Path Forward

AI absolutely has a role to play in evidence synthesis. When paired with human expertise and transparent methodology, it can accelerate searches, streamline screening, and reduce administrative burden. But AI must serve the process—not replace it. In oncology, the choice isn’t between a chatbot and a systematic literature review. It’s between cutting corners and saving lives. The stakes are too high for anything less than living, rigorous, and human-guided evidence.
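
The screening step described in this article (predefined criteria, explicit exclusion reasons, a transparent audit trail) can be made concrete with a minimal Python sketch. Everything in it is hypothetical and for illustration only: the `Study`, `Protocol` and `Decision` types, the `screen` function and the placeholder studies are assumptions, not any platform's actual implementation, and exact string matching stands in for the expert judgment a real review requires.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Study:
    """A candidate publication, reduced to hypothetical PICOS fields."""
    study_id: str
    population: str
    intervention: str
    comparator: str
    outcomes: tuple
    design: str


@dataclass(frozen=True)
class Protocol:
    """Eligibility criteria fixed before screening starts (assumed structure)."""
    population: str
    interventions: frozenset
    comparators: frozenset
    outcomes: frozenset
    designs: frozenset


@dataclass(frozen=True)
class Decision:
    """One audit-trail entry: what was decided, why, and when."""
    study_id: str
    included: bool
    reasons: tuple  # every criterion that failed; empty when included
    screened_on: date


def screen(study: Study, protocol: Protocol, screened_on: date) -> Decision:
    """Check one study against every criterion and record each failure."""
    reasons = []
    if study.population != protocol.population:
        reasons.append("population")
    if study.intervention not in protocol.interventions:
        reasons.append("intervention")
    if study.comparator not in protocol.comparators:
        reasons.append("comparator")
    if not protocol.outcomes.intersection(study.outcomes):
        reasons.append("outcomes")
    if study.design not in protocol.designs:
        reasons.append("design")
    return Decision(study.study_id, not reasons, tuple(reasons), screened_on)


# Placeholder protocol and studies, invented purely for illustration.
protocol = Protocol(
    population="adults with condition A",
    interventions=frozenset({"drug X"}),
    comparators=frozenset({"standard of care", "placebo"}),
    outcomes=frozenset({"overall survival", "progression-free survival"}),
    designs=frozenset({"RCT"}),
)
studies = [
    Study("STUDY-001", "adults with condition A", "drug X", "placebo",
          ("overall survival",), "RCT"),
    Study("STUDY-002", "adults with condition A", "drug X", "placebo",
          ("response rate",), "case series"),
]

audit_log = [screen(s, protocol, date(2025, 1, 1)) for s in studies]
for entry in audit_log:
    status = "include" if entry.included else "exclude"
    print(entry.study_id, status, entry.reasons)
```

Because `screen` depends only on the study, the protocol and the screening date, rerunning it on the same inputs yields the same decision and the same recorded reasons, which is the repeatability a chatbot summary does not offer. In this sketch the final call on inclusion would still rest with a human reviewer.
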
Anna Forsythe is the Founder and President of Oncoscope-AI, the first platform to bring together real-time oncology treatment data, clinical guidelines, research publications, and regulatory approvals — all in one place, just like Expedia for cancer care. Available free to oncology professionals worldwide, Oncoscope-AI is redefining how cancer care information is accessed and applied.

From Cochrane To Chatbots: Why Evidence Matters Now More Than Ever In The AI Era

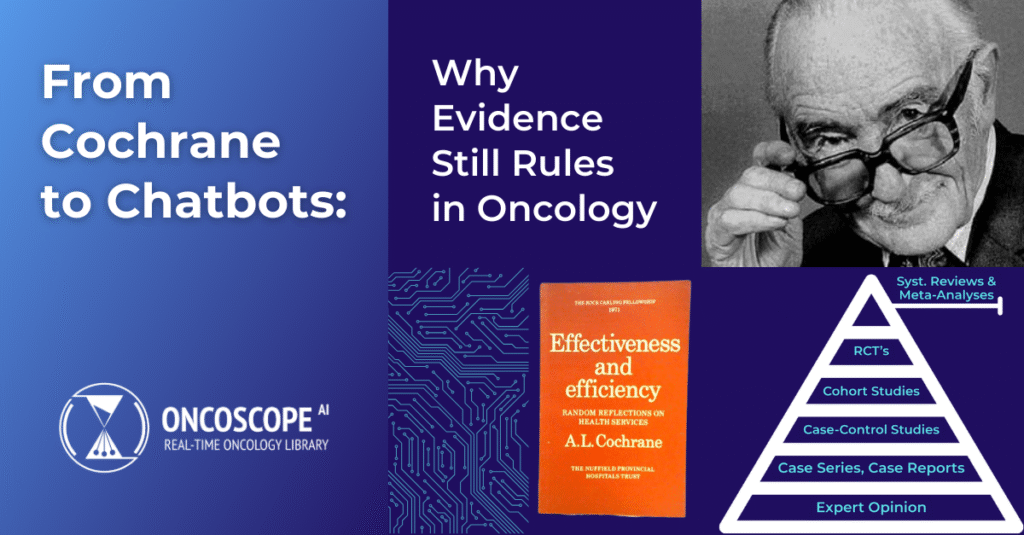

This article was originally published in Forbes on 02 September 2025.

In oncology, discovery is moving at warp speed. Hundreds of new studies are published each week, sometimes more than a dozen in a single day. For patients and providers, this fire hose of information could equate to life-saving breakthroughs—a new biomarker, a novel dosing schedule or a survival-prolonging treatment. But it also equates to risk. Misinterpret a flawed trial, overtrust anecdotal experience or trust the wrong person, and patients are left at the mercy of ineffective or even harmful treatments. And so, as this deluge of data overwhelms us with such velocity, the imperative is to ensure that decisions are informed by precise, complete and transparent evidence. That all starts with one man: Archibald Leman Cochrane.

Archibald Cochrane: The Original Evidence Disruptor

Archibald Cochrane was a British physician and epidemiologist who served as a prisoner-of-war doctor in World War II. With effectively no medicines at his disposal, he watched patients suffer because care was based more on habit than proof. After the war, he became an outspoken advocate for a revolutionary principle: Medicine must be based on evidence that has been tested and proven, not on practice or expert opinion.

In 1972, he published Effectiveness and Efficiency: Random Reflections on Health Services, a text that shook the medical establishment through criticism of its reliance on anecdotes. He argued that randomized controlled trials (RCTs) were required to determine whether treatments were effective and called for doctors and patients to be presented with objective summaries of all pertinent evidence. His vision inspired the Cochrane Collaboration, founded in 1993, which remains the global leader in producing systematic reviews. Before Cochrane, controlled clinical trials were far from routine in medicine. He championed their use, and randomized controlled trials have since become the gold standard for evaluating new treatments.

Building On Solid Ground

Cochrane’s work gave us the pyramid of evidence, often used to illustrate the hierarchy of reliability. The higher you climb in the pyramid, the stronger the foundation for life-and-death decisions.

Why Systematic Reviews Save Lives

In oncology, where treatment options evolve daily, systematic reviews are essential. A single study, whether positive or negative, rarely tells the full story. Systematic reviews, however, consider the entire body of evidence and account for consistency, quality and nuance. Nearly every modern guideline (from the World Health Organization to the FDA) requires systematic reviews as the foundation of clinical recommendations. These methods are necessary because lives depend on them.

When Chatbots Pretend To Be Experts

We’ve now entered the AI era, where large language models and chatbots can generate fluent answers to intricate medical questions in seconds. To overwhelmed oncologists confronting a deluge of literature, this might look like salvation. But these tools don’t follow the hierarchy of evidence. They don’t methodically scan all the studies, grade the quality of trials or reveal how they arrived at their conclusions. They generate responses based on text patterns that are sometimes accurate, sometimes incomplete and sometimes entirely fabricated.

I heard an example just last week from one of my medical colleagues: An AI tool produced a reference under his name for an article he had never written. It was a perfect illustration of how these systems behave like an overeager student desperate to provide an answer, even if that means inventing one. A chatbot’s “best guess” could mean proposing a treatment that introduces unnecessary toxicity to patients or, worse, missing a well-documented new trial that would improve survival.
The irony is that most of us would never hand over the task of booking a flight to a chatbot without carefully double-checking everything—the departure point, the destination city, the time of day. Yet somehow, when it comes to medical treatment, many are willing to accept incomplete or outdated chatbot outputs at face value. That disconnect should give us pause.

Fluency Isn’t Truth

One of the greatest risks of AI in medicine is that it sounds authoritative. Chatbots excel at fluency, but fluency is not the same as truth. At this time, AI simply isn’t ready for the weight we’re placing on it. Too many people are so captivated by what it can do that they forget the most basic principle of science and medicine. You must double-check the information. That fascination is dangerous. It encourages blind trust in answers that may be incomplete, misleading or outright wrong. If we allow fluency to masquerade as reliability, medicine risks sliding backward to the pre-Cochrane era, when anecdotes and authority carried more weight than solid data.

Smarter Together

I’m not saying that AI has no place in evidence-based medicine. Far from it. Let machines handle the tedious but essential work of scanning thousands of papers, formatting endless references and keeping reviews continuously updated as new trials are published. These are the repetitive housekeeping tasks that often slow researchers down, yet must be done with precision. I often compare it to household chores. AI should be doing the vacuuming so humans can spend their time on meaningful work.

That’s exactly how my team approaches it. We’ve built a model that uses 36 different AI systems, integrated under the supervision of PhD-level experts, to make systematic reviews dramatically faster without compromising accuracy. Don’t outsource judgment to machines. Give human experts more bandwidth to do what only they can do: Interpret the evidence, weigh the nuances and make the right decisions for patients.

Evidence Still Rules

Cochrane warned half a century ago that a great deal of medicine lacked solid evidence. His admonition is even more urgent today. With AI, the danger is overconfidence in fluent machines that sound convincing but aren’t built on rigorous evidence. AI must serve evidence, not replace it. Healthcare leaders, policymakers and clinicians need to insist on transparency, rigor and comprehensiveness as absolute necessities. Because in oncology, and medicine in general, lives are at stake. And regardless of how much the tech advances, one thing will always be true: Evidence still rules.

From Cochrane to Chatbots: Have We Forgotten the Evidence?

If medicine had a godfather of evidence, his name would be Archie. Archibald Leman Cochrane, that is. Cochrane wasn’t just a physician—he was a rebel against tradition. In the 1970s, he looked around at the medical world and said, essentially: “Too much of what we do is based on habit and authority, not actual proof.” He proposed a radical idea: let’s rank evidence by reliability. At the bottom sat case reports and expert opinions—interesting, but hardly solid. Above that came observational studies, then randomized controlled trials (RCTs). And sitting proudly at the top of the pyramid? Systematic literature reviews (SLRs): structured evaluations that capture all the studies, critique their quality, and synthesize their findings. That hierarchy became the foundation of what we now call Evidence-Based Medicine (EBM).

Why Systematic Reviews Matter

Systematic reviews aren’t academic busywork. They’re the reason guidelines from the World Health Organization, NICE, and the American Society of Clinical Oncology (ASCO) are trustworthy. They’re why the FDA demands comprehensive, systematic evidence before approving a therapy. The principle is simple: no single trial tells the full story, but when you put the whole picture together—carefully, transparently, reproducibly—you can make decisions that change lives.

But Have We Forgotten Cochrane?

Fast forward to today. AI chatbots can answer clinical questions in seconds. They sound authoritative, but they don’t follow Cochrane’s hierarchy. They don’t systematically review literature. They don’t grade evidence for quality. And they definitely don’t show their work. In oncology, where new studies are published daily, this is not a minor issue. A chatbot that casually cites one study—or worse, invents a citation—could mislead a physician into recommending something harmful, or missing a life-saving option.
It’s like we built the evidence pyramid over decades, and now, dazzled by shiny AI, we’re forgetting why we built it in the first place.

The Way Forward

The future of evidence isn’t abandoning systematic reviews. It’s making them living: constantly updated, rigorous, and transparent. AI does have a role to play here—not as a chatbot dispensing unverified answers, but as a tool that accelerates and augments systematic reviews. That’s exactly what we’re building at Oncoscope: living SLRs, human-vetted and PhD-curated, augmented by AI. Reliable, current, and ready to support the most important decisions in oncology. Because in cancer care, evidence isn’t an academic debate. It’s a matter of life, harm, or hope. So the question is: are we going to let chatbots distract us from Cochrane’s lesson—or use AI to fulfill it?

Anna Forsythe is the Founder and President of Oncoscope-AI, the first platform to bring together real-time oncology treatment data, clinical guidelines, research publications, and regulatory approvals — all in one place, just like Expedia for cancer care. Available free to oncology professionals worldwide, Oncoscope-AI is redefining how cancer care information is accessed and applied. A clinically trained Doctor of Pharmacy (PharmD), Anna also holds a Master’s in Health Economics and Policy from the University of Birmingham (UK) and an MBA from Columbia University. She previously co-founded Purple Squirrel Economics (acquired by Cytel in 2020) and led Global Value and Access at Eisai Pharmaceuticals, following earlier roles at Novartis and Bayer in clinical research and health economics.